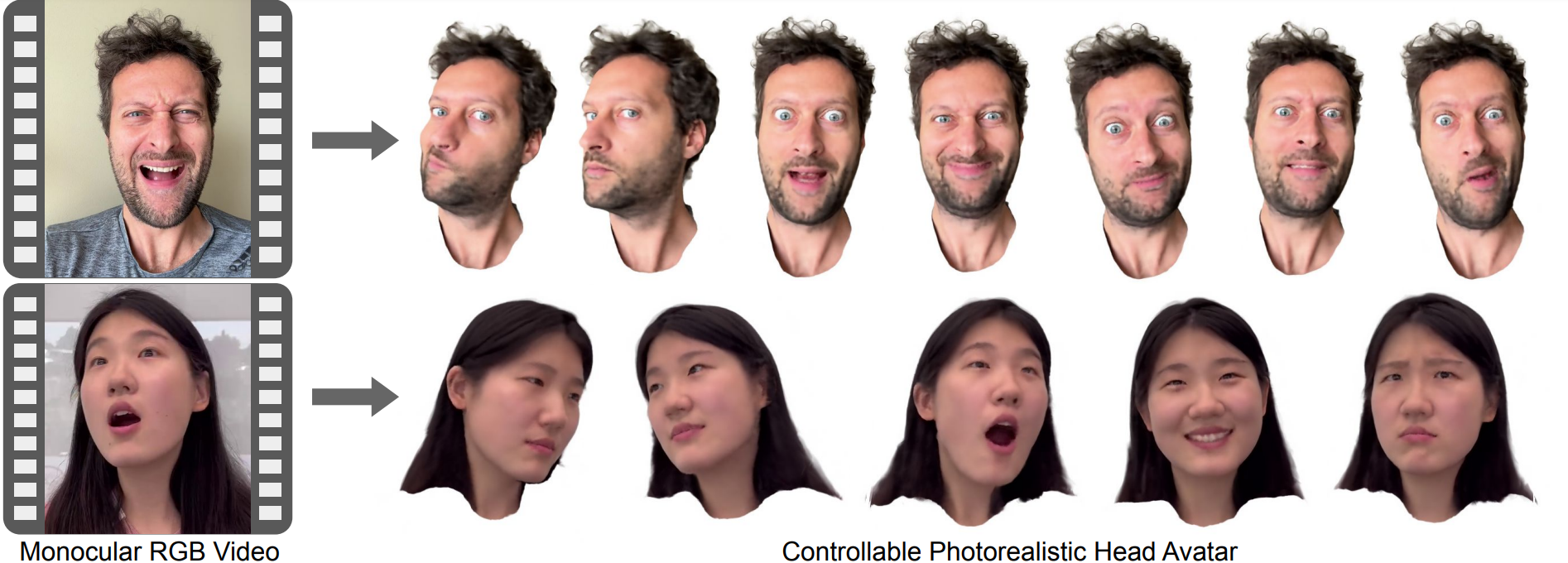

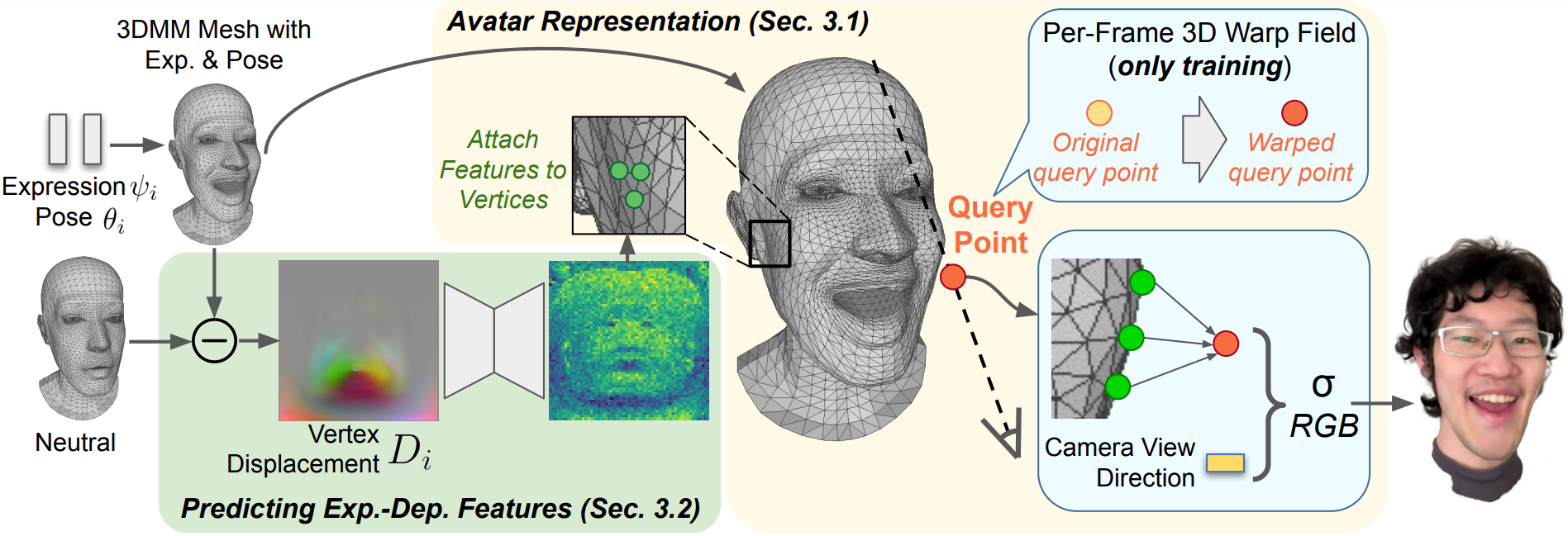

We propose a method to learn a high-quality implicit 3D head avatar from a monocular RGB video captured in the wild. The learnt avatar is driven by a parametric face model to achieve user-controlled facial expressions and head poses. Our hybrid pipeline combines the geometry prior and dynamic tracking of a 3DMM with a neural radiance field to achieve fine-grained control and photorealism. To reduce over-smoothing and improve the synthesis of out-of-model expressions, we propose to predict local features anchored on the 3DMM geometry. These learnt features are driven by the 3DMM deformation and interpolated in 3D space to yield the volumetric radiance at a given query point. We further show that using a Convolutional Neural Network in the UV space is critical for incorporating spatial context and producing representative local features. Extensive experiments show that our method reconstructs high-quality avatars, with more accurate expression-dependent details, good generalization to out-of-training expressions, and quantitatively superior renderings compared to other state-of-the-art approaches.
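To make the idea of interpolating vertex-anchored features at a query point concrete, here is a minimal sketch in plain Python. It is not the method's actual implementation: the k-nearest-neighbour selection, the inverse-distance weighting, and the names `interpolate_local_features`, `vertices`, and `features` are all assumptions chosen for illustration. In the pipeline described above, the anchors would be the deformed 3DMM vertices, and the blended feature would then be decoded into radiance by a network, which this sketch omits.

```python
import math

def interpolate_local_features(query, vertices, features, k=3, eps=1e-8):
    """Blend the features of the k anchors nearest to `query`.

    Weights are inverse distances normalised to sum to 1. This weighting
    is an assumption for illustration, not the scheme from the paper.
    """
    dists = [math.dist(query, v) for v in vertices]
    # Indices of the k closest anchor vertices.
    nearest = sorted(range(len(vertices)), key=dists.__getitem__)[:k]
    weights = [1.0 / (dists[i] + eps) for i in nearest]
    total = sum(weights)
    dim = len(features[0])
    return [
        sum(w * features[i][c] for w, i in zip(weights, nearest)) / total
        for c in range(dim)
    ]

# Toy anchors standing in for deformed mesh vertices (hypothetical data).
vertices = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (0.0, 0.0, 1.0)]
# One 2-D feature vector per vertex (hypothetical data).
features = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5], [0.2, 0.8]]

query = (0.1, 0.1, 0.0)
blended = interpolate_local_features(query, vertices, features, k=3)
at_vertex = interpolate_local_features(vertices[0], vertices, features, k=3)
```

Because the features stay attached to the vertices, moving the vertices with a new expression or pose changes which anchors are nearest to a query point and how they are weighted, while the features themselves follow the surface.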

Labels - Left: Input Driving Video, Center: Rendered Avatar, Right: Rendered Depth

Labels - From left to right: (1) Input Driving Video, (2) Ours, (3) NerFACE [1], (4) NHA [2], (5) IMAvatar [3]

Labels - From left to right: (1) Input Driving Video, (2) -15 degrees, (3) 0 degrees, (4) +15 degrees

Labels - Left: Input Driving Video, Center: Rendered Avatar, Right: Rendered Depth

Labels - Left: Input Driving Video, Center: Rendered Avatar, Right: Rendered Depth